Project Temple

Reimagining the VR video browsing experience

THE JAUNT VR APP PROMISES

A HIGH-QUALITY AND EXPANSIVE LIBRARY OF VR CONTENT PLAYABLE ACROSS PLATFORMS TO CONSUMERS AROUND THE WORLD

From Zion to Temple

Initially, the app was known as “Zion” and served to showcase both the Jaunt One cinematic VR camera and the Jaunt Cloud Services stitching and publishing solution.

As VR grew in popularity, so did the ever-expanding library of 360 videos. As a product, “Zion” faced performance and architecture constraints, an input system that could not take full advantage of the new VR controllers, and a design for organizing and discovering content that could not scale with the growing library.

JAUNT VR PROJECT TEMPLE

RESEARCH & DISCOVERY

BROWSE

Use Depth to Give Context

One of the problems unique to VR is maintaining user context. A browse pattern that uses depth can help users understand where they are, how they got there, how to get back and where to go from here.

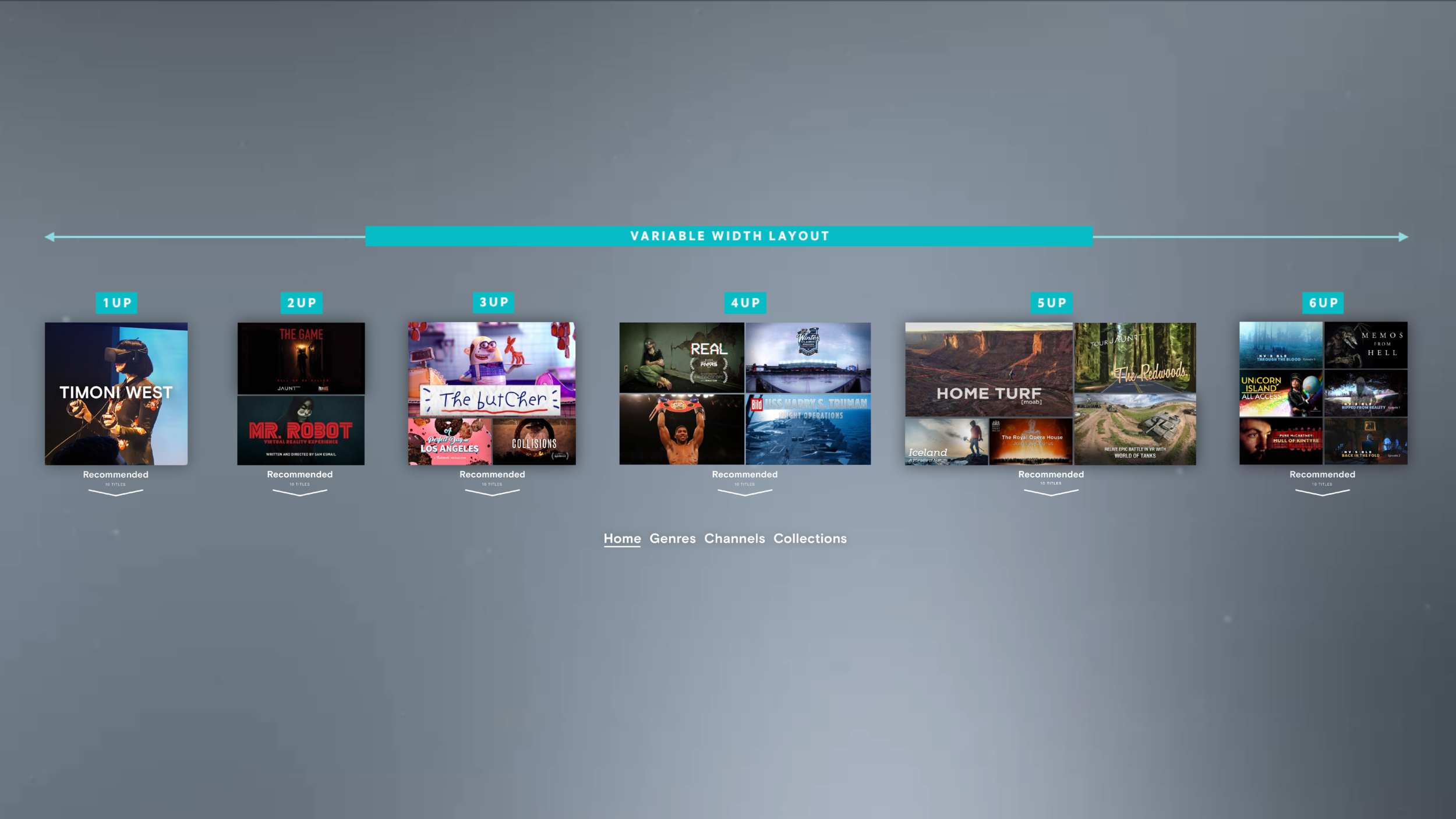

BROWSE BOARD SYSTEM

Server-Side Playlists

Publishing Managers can feature content and configure playlists from the Jaunt XR Platform’s Media Manager.

Playlist Types

Board, Channel & Title Playlists can have additional playlist types nested within them. This design affords flexibility and scalability.
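
To illustrate why nesting scales, a playlist can be pictured as a tree whose items are either titles or further playlists. The sketch below is only an assumption about how such a structure might look; the `Playlist` and `Title` classes, their fields and the `collect_titles` helper are hypothetical and do not describe the Jaunt XR Platform’s actual schema.

```python
from dataclasses import dataclass, field
from typing import List, Union

# The three playlist types named in the text; the string values are hypothetical.
PLAYLIST_KINDS = {"board", "channel", "title"}


@dataclass
class Title:
    # Hypothetical title record; real metadata fields are not specified.
    title_id: str
    name: str


@dataclass
class Playlist:
    kind: str
    name: str
    # Items may be titles or further playlists, which is what allows nesting.
    items: List[Union["Playlist", Title]] = field(default_factory=list)

    def __post_init__(self):
        if self.kind not in PLAYLIST_KINDS:
            raise ValueError(f"unknown playlist kind: {self.kind}")


def collect_titles(playlist: Playlist) -> List[Title]:
    """Depth-first walk returning every title reachable from a playlist, in order."""
    found: List[Title] = []
    for item in playlist.items:
        if isinstance(item, Title):
            found.append(item)
        else:
            found.extend(collect_titles(item))
    return found


home = Playlist("board", "Home", [
    Playlist("channel", "Olympic Channel", [
        Playlist("title", "Featured", [Title("t1", "Ski Jump"), Title("t2", "Bobsled")]),
    ]),
    Title("t3", "Standalone Title"),
])

print([t.name for t in collect_titles(home)])
# ['Ski Jump', 'Bobsled', 'Standalone Title']
```

Because a board, channel or title playlist is the same structure, a new grouping can be featured by nesting it rather than by changing the client.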

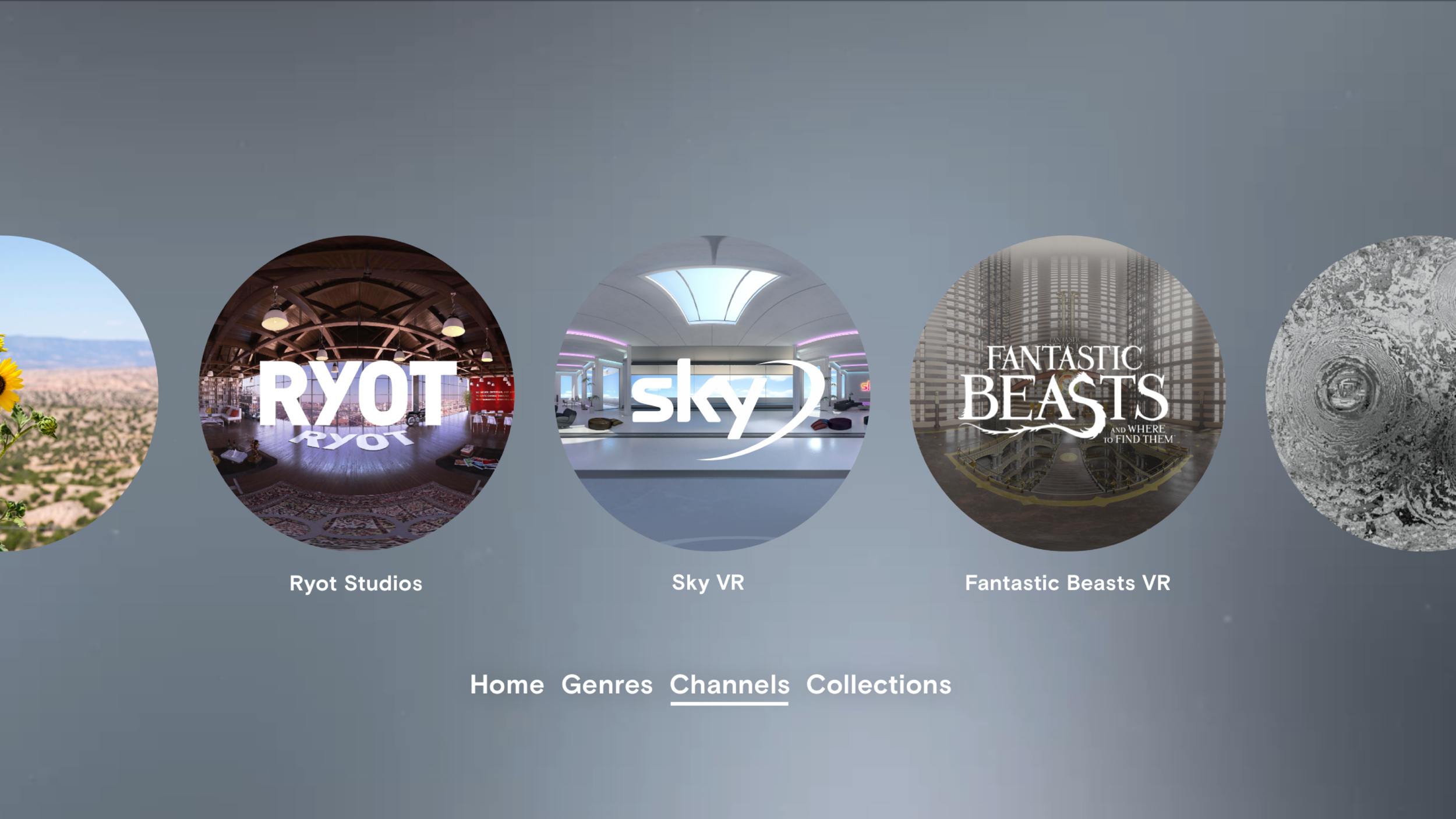

BRANDED CHANNELS

Branded Immersive Environments

Branded channels are dedicated areas that contain a channel’s content library and a branded immersive environment, providing a unique experience that can be customized through content and featuring.

OLYMPIC CHANNEL 360 ENVIRONMENT TOUR

TITLE PREVIEW

Previews for User Discovery

Title Previews are used to promote user discovery. Each successive preview state adds information and interactivity, helping move the user quickly from exploring to discovering titles they may like.

Title Preview States

State 1: The flat thumbnail creates the initial visual representation of a title in the board system.

State 2: A hover state that quickly lets the user discover additional information about the title, such as a short meta description, and peer into an image from the title.

State 3: A selected state with a full description and additional meta information. “Play” and “Download” prompts give the user actionable next steps.
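
The three preview states can be read as a small state machine. The sketch below assumes hypothetical input events (`hover_enter`, `hover_exit`, `select`, `dismiss`); the source does not say which controller inputs drive each transition.

```python
from enum import Enum, auto


class PreviewState(Enum):
    THUMBNAIL = auto()  # State 1: flat thumbnail in the board
    HOVER = auto()      # State 2: short description and a look into an image
    SELECTED = auto()   # State 3: full description with Play / Download prompts


# Hypothetical event names mapped to the next state.
TRANSITIONS = {
    (PreviewState.THUMBNAIL, "hover_enter"): PreviewState.HOVER,
    (PreviewState.THUMBNAIL, "select"): PreviewState.SELECTED,
    (PreviewState.HOVER, "hover_exit"): PreviewState.THUMBNAIL,
    (PreviewState.HOVER, "select"): PreviewState.SELECTED,
    (PreviewState.SELECTED, "dismiss"): PreviewState.THUMBNAIL,
}


def next_state(state: PreviewState, event: str) -> PreviewState:
    # Events that do not apply in the current state leave the preview unchanged.
    return TRANSITIONS.get((state, event), state)


state = PreviewState.THUMBNAIL
for event in ["hover_enter", "select", "dismiss"]:
    state = next_state(state, event)
print(state)  # PreviewState.THUMBNAIL
```

Keeping the transitions in one table makes it easy to check that every state has a path back to the board, which supports the goal of maintaining user context.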

Title Preview Assets

Flat Thumbnails provide a “poster” image in either a standard 16:9 format or, for featuring, a large 1:1 format.

Stereo Parallax Thumbnails display (on hover) a larger stereoscopic window that allows the user to peer into an immersive frame chosen from the title.

Stereo Preview Spheres let the user “grab” a full spherical image from the title preview and view it from inside the sphere. A comfortable transition takes the user from holding the small sphere at their controller to viewing it from inside a full spherical stereo environment.

TITLE DOWNLOAD

Download Manager

The download system delivers high-quality downloads to the client through a queue-based system. When the user initiates a download, a “Downloads” playlist board appears in the “Home” area and adapts to the number of titles in the playlist. A visual system gives clear feedback, showing the status of each downloaded title and offering management controls from the title preview panel. Primary and secondary controls appear as needed. Download status is also displayed on the title thumbnail wherever it appears within the system.

Download Flow

The title preview panel provides user control and feedback for downloads, along with error checking and a notification system that alerts the user when a title has finished downloading or when network or download errors occur.
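
The queue, per-title status and notification behavior described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `DownloadManager` class, its method names, the one-download-at-a-time policy and the notification wording are all hypothetical, not the shipped implementation.

```python
from collections import deque
from enum import Enum


class Status(Enum):
    QUEUED = "queued"
    DOWNLOADING = "downloading"
    DOWNLOADED = "downloaded"
    ERROR = "error"


class DownloadManager:
    """Hypothetical FIFO download queue with one active download at a time."""

    def __init__(self):
        self.pending = deque()   # titles waiting to start, oldest first
        self.status = {}         # title_id -> Status (what a thumbnail badge would read)
        self.active = None       # title currently downloading, if any
        self.notifications = []  # messages surfaced to the user

    def downloads_board(self):
        # The "Downloads" board exists only once something has been queued,
        # and it grows with the number of tracked titles.
        return list(self.status) if self.status else None

    def enqueue(self, title_id):
        if self.status.get(title_id) in (Status.QUEUED, Status.DOWNLOADING, Status.DOWNLOADED):
            return  # ignore duplicate requests; a failed title may be retried
        self.status[title_id] = Status.QUEUED
        self.pending.append(title_id)
        self._start_next()

    def _start_next(self):
        if self.active is None and self.pending:
            self.active = self.pending.popleft()
            self.status[self.active] = Status.DOWNLOADING

    def finish(self, ok=True):
        """Record the active download's result, notify the user, start the next title."""
        if self.active is None:
            return None
        done, self.active = self.active, None
        if ok:
            self.status[done] = Status.DOWNLOADED
            self.notifications.append(f"{done} is downloaded")
        else:
            self.status[done] = Status.ERROR
            self.notifications.append(f"{done} failed: network or download error")
        self._start_next()
        return done


manager = DownloadManager()
manager.enqueue("t1")
manager.enqueue("t2")
manager.finish()          # t1 completes, t2 starts
manager.finish(ok=False)  # t2 fails and the user is notified
print(manager.downloads_board(), manager.notifications)
```

Keeping a single status map lets every surface (the Downloads board, the preview panel and any thumbnail) read the same state rather than tracking it separately.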

PLAYER

The Jaunt Player

The Jaunt Player is built on the XRP Player Engine SDK, which provides high-quality cross-platform playback of both content-adaptive streams and monolithic-format downloads.

The player integrates a unique user experience. A spherical object appears in depth, containing the playback controls, subtitle language selection and scrubbing transport. The player sphere lets the user see nearly the full breadth of a 360 video within their field of view (FOV). While the user scrubs, the player sphere displays the video inside it in stereoscopic depth; the surrounding video environment sphere updates only when the scrubbing controls are released. This helps avoid the discomfort or disorientation that scrubbing the full environment could cause.
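
The scrubbing rule above (preview inside the player sphere, commit to the environment only on release) can be modeled in a few lines. This is a hypothetical sketch; the `ScrubController` name, its fields and the clamping behavior are assumptions for illustration, not part of the XRP Player Engine SDK.

```python
from dataclasses import dataclass


@dataclass
class ScrubController:
    """Hypothetical model: the player sphere follows the scrub position, while the
    surrounding environment sphere stays put until the controls are released."""
    duration: float
    environment_time: float = 0.0  # time shown by the full 360 environment
    sphere_time: float = 0.0       # time shown inside the player sphere
    scrubbing: bool = False

    def drag(self, t: float) -> None:
        # Clamp to the title length; only the player sphere follows the drag.
        self.scrubbing = True
        self.sphere_time = min(max(t, 0.0), self.duration)

    def release(self) -> None:
        # Commit the new position to the environment sphere in one update.
        if self.scrubbing:
            self.environment_time = self.sphere_time
            self.scrubbing = False


scrub = ScrubController(duration=120.0)
scrub.drag(30.0)
scrub.drag(45.5)
print(scrub.environment_time)  # 0.0 (environment unchanged while dragging)
scrub.release()
print(scrub.environment_time)  # 45.5
```

The environment therefore changes once per scrub gesture instead of continuously, which is the property that keeps the surrounding view stable.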

The player design also includes transition points for entering, exiting and pausing a video title. These transitions help maintain user context and provide a smooth passage into and out of a stereoscopic 360 video environment.

VIEW THE JAUNT PLAYER PROTOTYPE

FINAL OVERVIEW

The Final Evolution

The Temple product launch brought all the pieces together after extensive iteration, prototyping and testing. The new Jaunt VR consumer app, “Temple,” first launched in Q4 2017 and was featured at the Windows Mixed Reality (WMR) platform launch after a month of testing and feedback with the WMR team. “Temple” was then released on the Oculus, Vive and Sony PlayStation VR platforms in Q1 2018.

JAUNT VR PRODUCT DESIGN EVOLUTION VIDEO

TEAM CONTRIBUTORS

Additional design contributions by Ethan Miller and Matthew Luther.

Unity & technical prototyping by Gregory Lutter and Matthew Luther.

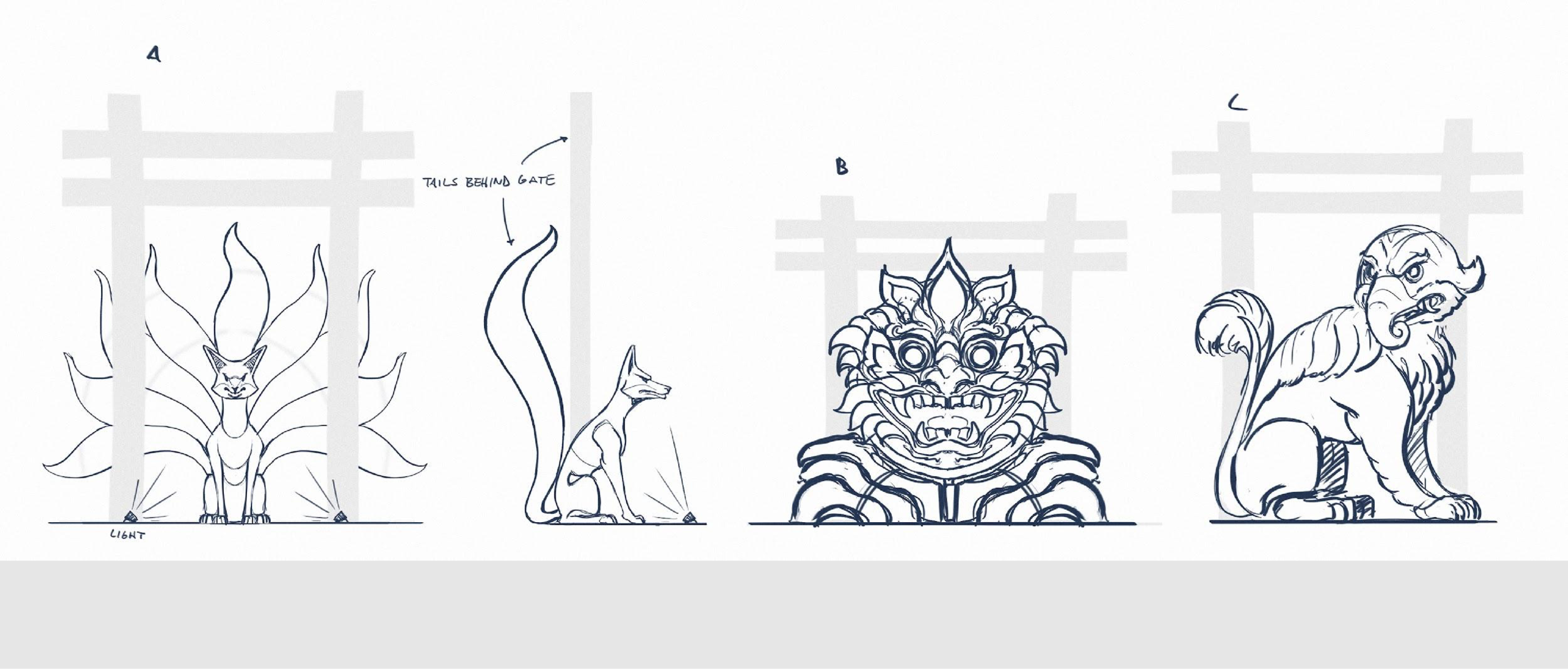

Concept Illustrations by Javier Lazlo.